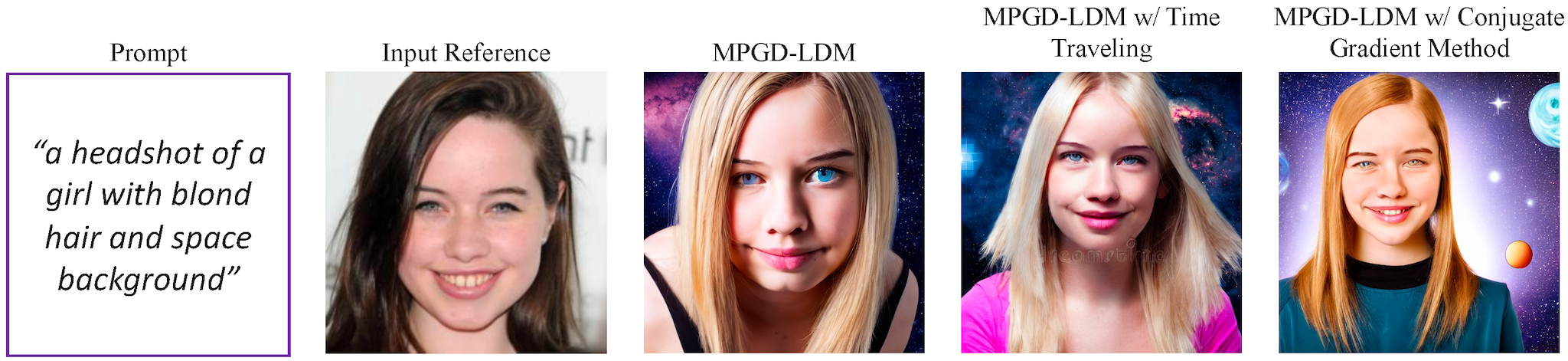

Despite recent advances, conditional image generation still faces challenges of cost, generalizability, and the need for task-specific training. In this paper, we propose Manifold Preserving Guided Diffusion (MPGD), a training-free conditional generation framework that leverages pretrained diffusion models and off-the-shelf neural networks with minimal additional inference cost for a broad range of tasks. Specifically, we leverage the manifold hypothesis to refine the guided diffusion steps and introduce a shortcut algorithm in the process. We then propose two methods for on-manifold training-free guidance using pretrained autoencoders and demonstrate that our shortcut inherently preserves the manifolds when applied to latent diffusion models. Our experiments show that MPGD is efficient and effective for solving a variety of conditional generation applications in low-compute settings, and can consistently offer up to 3.8× speed-ups over the baselines at the same number of diffusion steps while maintaining high sample quality.
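The abstract does not spell out the shortcut, so the following is only an illustrative sketch of one plausible reading: in a DDIM-style sampler, the guidance gradient is taken with respect to the predicted clean sample rather than the noisy sample, which avoids backpropagating through the diffusion network. Everything named here (`guided_ddim_step`, `toy_eps_model`, `sample`, the linear noise schedule, the quadratic loss) is a hypothetical stand-in chosen for the example, not the authors' implementation, and the autoencoder-based on-manifold variants are omitted.

```python
import numpy as np


def guided_ddim_step(x_t, eps_pred, abar_t, abar_prev, loss_grad, step_size):
    """One deterministic DDIM step (eta = 0) with guidance on the clean estimate."""
    # Predicted clean sample from the noisy sample and the predicted noise.
    x0_hat = (x_t - np.sqrt(1.0 - abar_t) * eps_pred) / np.sqrt(abar_t)
    # Assumed shortcut: the loss gradient is taken w.r.t. x0_hat directly,
    # so the noise predictor is never differentiated.
    x0_hat = x0_hat - step_size * loss_grad(x0_hat)
    # Move to the previous (less noisy) timestep, reusing the predicted noise.
    return np.sqrt(abar_prev) * x0_hat + np.sqrt(1.0 - abar_prev) * eps_pred


def toy_eps_model(x_t, abar_t):
    """Hypothetical stand-in for a pretrained network: the exact noise
    predictor when the data distribution is a standard normal."""
    return np.sqrt(1.0 - abar_t) * x_t


def sample(target, num_steps=50, step_size=0.5, seed=0):
    """Run the guided sampler toward `target` under a toy quadratic loss."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(target.shape)
    # Toy schedule of cumulative alphas, ordered from noisy to clean.
    abars = np.linspace(0.01, 1.0, num_steps + 1)

    def loss_grad(x0):
        # Gradient of 0.5 * ||x0 - target||^2 (hypothetical guidance loss).
        return x0 - target

    for abar_t, abar_prev in zip(abars[:-1], abars[1:]):
        eps = toy_eps_model(x, abar_t)
        x = guided_ddim_step(x, eps, abar_t, abar_prev, loss_grad, step_size)
    return x


target = np.array([1.0, -2.0, 0.5])
result = sample(target)
```

In a real setting, `toy_eps_model` would be replaced by a pretrained diffusion network and `loss_grad` by the gradient of an off-the-shelf network's loss, typically obtained with automatic differentiation. The analytic gradient is used here only to keep the sketch dependency-free.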

@inproceedings{he2024manifold,
  title={Manifold Preserving Guided Diffusion},
  author={Yutong He and Naoki Murata and Chieh-Hsin Lai and Yuhta Takida and Toshimitsu Uesaka and Dongjun Kim and Wei-Hsiang Liao and Yuki Mitsufuji and J Zico Kolter and Ruslan Salakhutdinov and Stefano Ermon},
  booktitle={The Twelfth International Conference on Learning Representations},
  year={2024},
  url={https://openreview.net/forum?id=o3BxOLoxm1}
}